Ex Machina

Film

Selected Images

Concept

The idea

The idea arose from the intention of placing a medium at the center of the installation: an object capable of existing simultaneously in the viewer’s reality and in the artificially generated reality of the images. Interacting with it creates a sense of misalignment with reality, catapulting the visitor into the reality of the archive.

After generating various ideas around this theme, we decided to pursue the artificial creation of a religious cult. Religious artifacts, since the dawn of time, have carried with them a legacy of superstitions and beliefs—what would happen if an artifact truly had power?

References

We began reflecting on some references, including The Seven Heavenly Palaces [1]. Anselm Kiefer’s installation evokes a spiritual journey through the ruins of the West and humanity’s aspiration to ascend toward the divine. In a dialogue between memory, matter, and the infinite, the work—inspired by the Sefer Hechalot—transforms space into an initiatory path where past and future converge in a dimension of suspension and spiritual reflection. In our case, the feeling of immensity of Kiefer’s buildings would be replaced by the mystery and curiosity evoked by the three objects.

The Seven Heavenly Palaces, Anselm Kiefer

The EM Table [2] explores the dynamic relationship between matter, light, and perception through a system of transparencies, reflections, and movements. The interaction is not static but evolves based on the position of the object and the angle of the light, transforming the table into a device that responds sensitively to environmental variations. Playing with different objects and the movement imparted to them was the key to the interaction of Ex Machina.

EM Table, Jonathan Joanes and Emmanuel Adeusi

The archive

The story behind

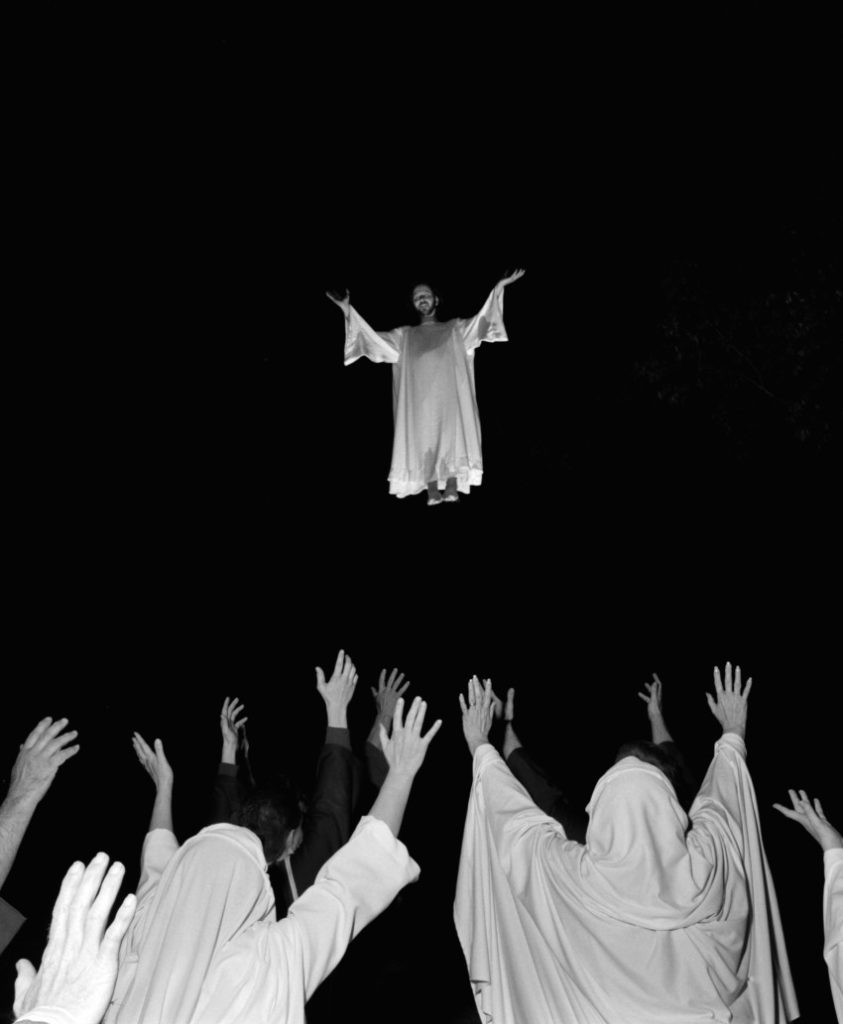

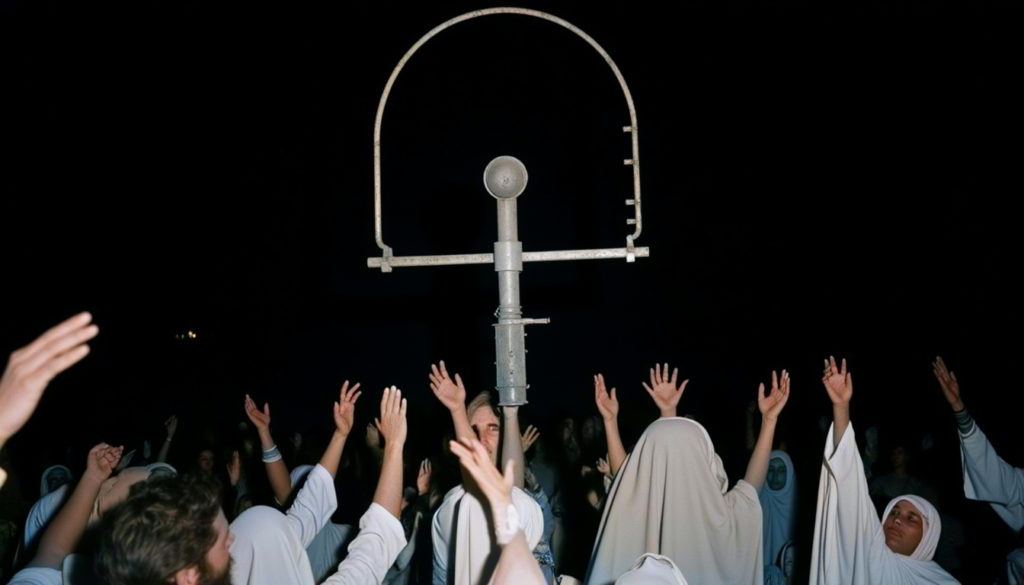

Ex Machina tells the story of a religious cult set in an undefined time, balancing between a past shaped by ancient rituals and a post-industrial future where artificial artifacts become objects of worship.

Each photograph captures a moment in the life of the cult, each archive an aspect of the religion. From acts of intimate contemplation to the frenetic construction of metallic structures, passing through unsettling initiation rites, the cult unfolds before the eyes of those who choose to interact with it.

Three objects exist in our reality, somewhere between archaeological relics and symbols of a possible future, serving as a bridge between reality and the cult’s archives.

Photo references

To generate the images artificially, we explored various references that could help construct the tokens for the different objects, ultimately gathering around a hundred images from various existing archives. Our research was divergent, ranging from archives of non-existent cults (The God Machine, Wouter Le Duc [3]) to projects that document religion in its most intimate aspects (The Religious, Anna Shimshak [4]), and photographs capturing religious life in rural communities (The Great Passion Play, Carl De Keyzer [5]). Additionally, we incorporated photographs not directly related to religion but that explore traditions and rituals from different communities (Estroboscopio, Alexandre Del Mar [6]).

The God Machine, Wouter Le Duc

The Religious, Anna Shimshak

Estroboscopio, Alexandre Del Mar

The Great Passion Play, Carl De Keyzer

Archive generation

We generated the images with Replicate. After gathering various reference archives, we organized them into different folders to create distinct tokens based on specific needs. In the end, we developed three main tokens for the three objects, along with two additional ones for high-quality flash photography and close-up shots.

I. Aggregation

Aggregation archive

For the aggregation object, we generated images that convey a sense of chaotic energy—a frenzy driven by the crowd’s collective action to build something. Construction components, arches and metal sheets blend with processions and wild dances, immersing the viewer among the members of the cult.

II. Contemplation

Contemplation archive

For the contemplation object, we generated images that convey a sense of meditation. The cult becomes an intimate expression and a connection with the beyond. Small metallic objects are carried and held close to the body: the figures become solitary, while the close-up photographs depict acts of baptism in the night-time light.

III. Power

Power archive

For the object related to the concept of power, we generated images that tell of a ritual—something we should not have the chance to witness. The photographs become blurred, captured by a blinding flash. A few figures move in the night: they drag masks, move in a disjointed manner and contemplate the darkness.

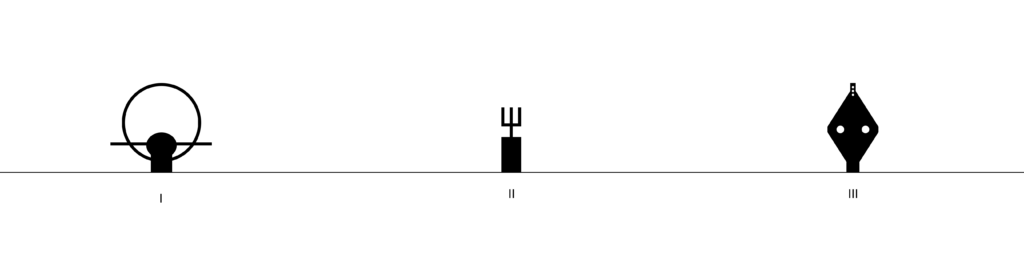

The objects

The process of designing the objects was preceded by a schematization of possible forms and their role within the navigation of the archive. From there, we chose three paths. The first object would be linked to the concept of aggregation, the second to contemplation, and the third to power—three themes closely tied to the notion of worship.

At the same time, we began collecting various references and built a dataset containing design objects, tribal masks, and religious artifacts. Then, using the constructed dataset, we trained a Replicate model to generate images of the objects themselves. This choice allowed artificial intelligence to play an active role from the very beginning of the design process, further blurring the boundary between the real and the artificial.

AI generated images

Subsequently, we used a second AI tool, Trellis, to obtain the 3D mesh of the chosen images. Our idea of 3D printing the artificially generated mesh encountered limitations due to the program’s current inability to achieve a sufficiently high level of model refinement. Additionally, we needed to internally engineer each object to accommodate the electronic components within them. For these reasons we decided to model each object from scratch using Autodesk Fusion 360 to ensure maximum control. Nevertheless, Trellis proved to be a powerful tool for quickly visualizing an early 3D version of the object.

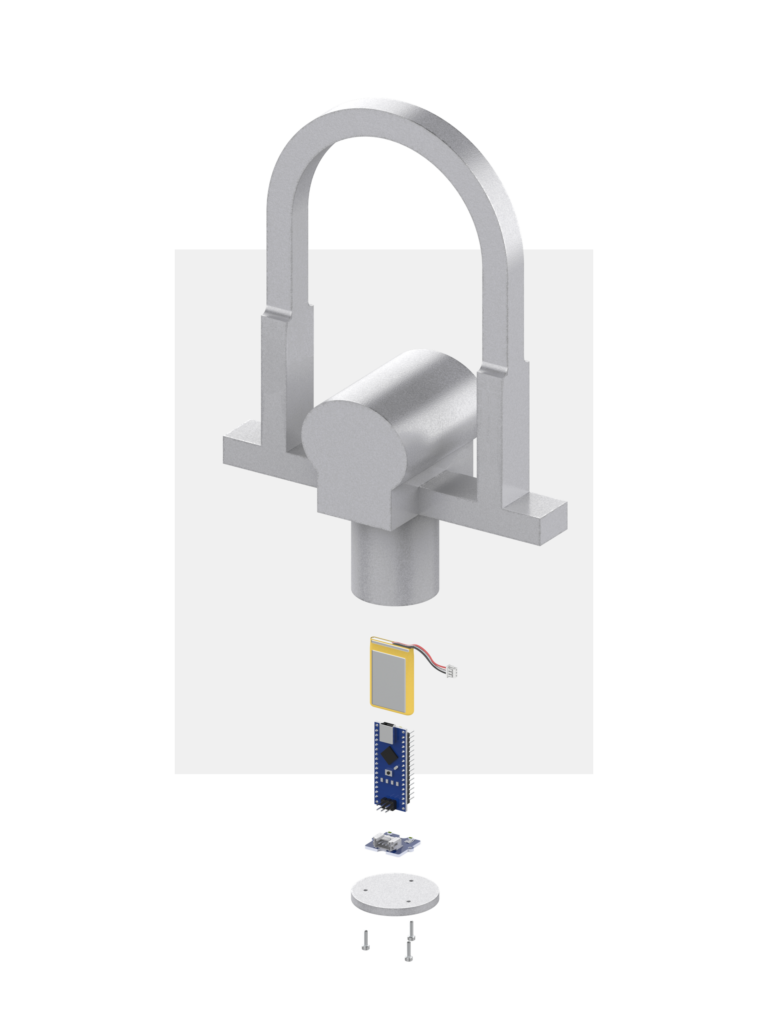

I. Aggregation

AI image (generated on Replicate)

AI 3D mesh (generated on Trellis)

3D model

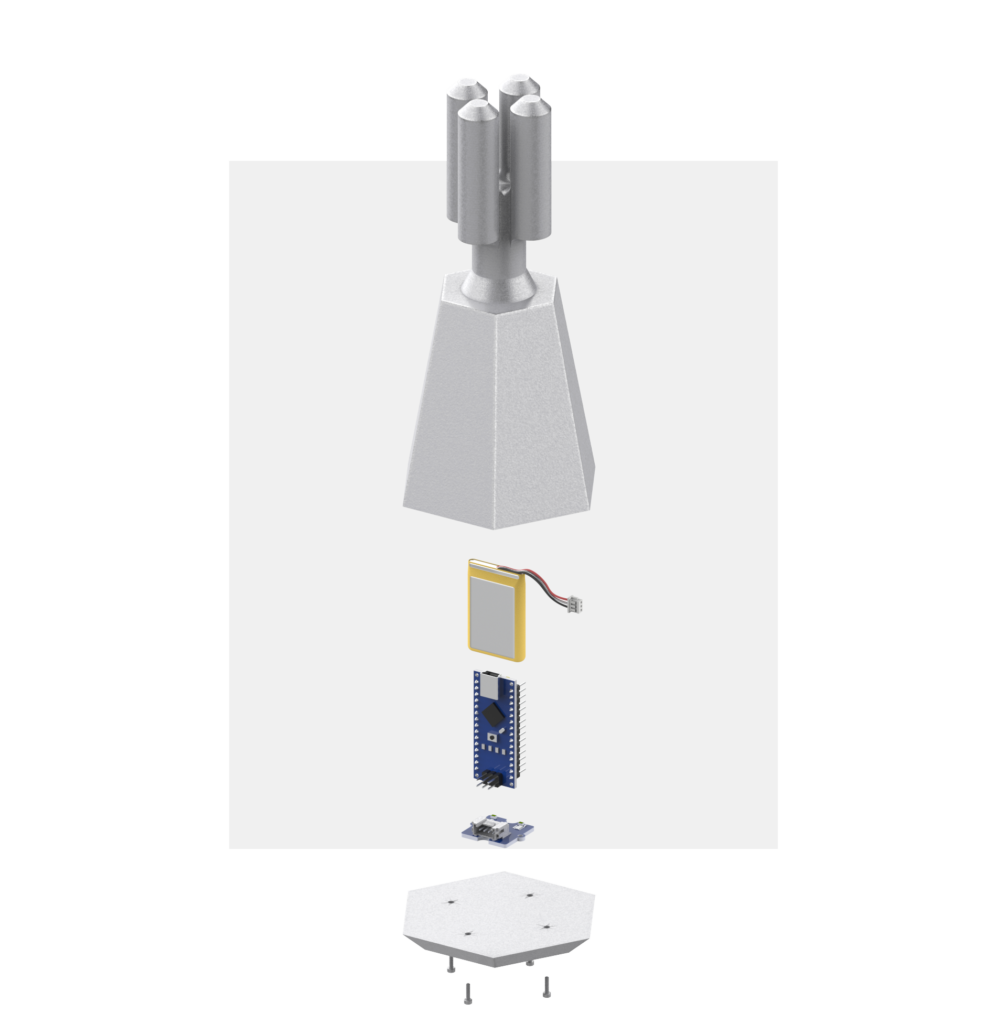

II. Contemplation

AI image (generated on Replicate)

AI 3D mesh (generated on Trellis)

3D model

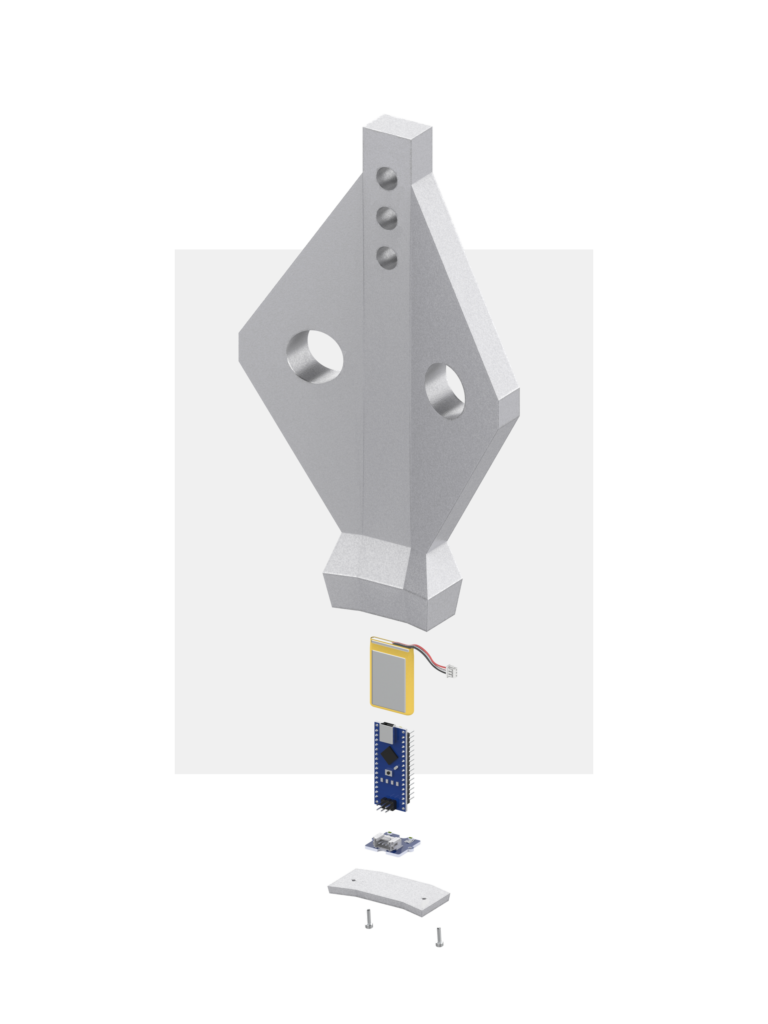

III. Power

AI image (generated on Replicate)

AI 3D mesh (generated on Trellis)

3D model

Prototyping

We started prototyping the objects by experimenting with different finishing techniques for 3D printing, aiming to achieve the effect of an artifact that was not entirely perfect but showed signs of wear and use. To this end, we decided to hand-fill the 3D-printed object and then finish it with a metallic paint. The process was therefore conceptually the same as the design phase: a digital artifact that is subsequently worked on and refined by human hands.

1:5 prototype (galvanized paint)

1:5 prototype (chromed paint)

Mask prototypes

Thanks to the choice of modeling the final objects with Fusion 360, we were able to have full control over their dimensions. This allowed us to define the space needed to house the electronics required for the interaction with the archive.

The interaction

The exhibition features a projector that presents three different archives of images generated by artificial intelligence, each representing a distinct concept: aggregation, contemplation, and power—all revolving around the central theme of the cult. These three ideas are materialized through three different objects that visitors can pick up and move to interact with the image archives.

When a visitor picks up an object, the corresponding archive becomes visible on the wall, accompanied by evocative sounds. Picking up a second or third object splits the screen, displaying multiple archives simultaneously. The images appear one after the other at a speed determined by the object’s inclination: the more horizontal it is, the faster the archive progresses, and the higher the volume of the accompanying sounds.

The first step in developing the interaction was breaking down the technical requirements into smaller tasks, starting with the physical interaction, followed by the on-screen web interaction, and finally the sound design.

All these different components communicate through a Supabase database.

The database

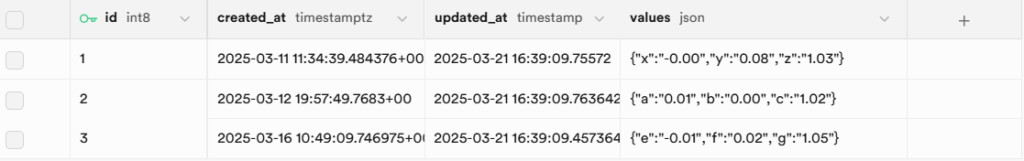

We set up a database using Supabase with a table that can be updated and read in real time. The table consists of three rows, each corresponding to one of the objects, collecting their data and storing it in a structured JSON format.

A screenshot of the database's table structure
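As a rough illustration of how a three-row table can drive three archives, the sketch below routes an incoming row to the state of the matching object. The row shape (an `id` plus a `data` JSON column holding `x`, `y`, `z`), the id-to-object mapping, and the helper name `applyRow` are all assumptions made for this example, not the project's actual schema.

```javascript
// Hypothetical mapping from row id to object name (assumed, not taken from the project).
const OBJECT_BY_ROW_ID = { 1: "aggregation", 2: "contemplation", 3: "power" };

// Returns a new state in which the tilt reading of the matching object is updated.
// Rows with an unknown id or a malformed payload leave the state untouched.
function applyRow(state, row) {
  const name = OBJECT_BY_ROW_ID[row?.id];
  const z = row?.data?.z;
  if (!name || typeof z !== "number" || !Number.isFinite(z)) return state;
  return { ...state, [name]: z };
}

// All three objects start at rest (z close to 1 g on the vertical axis).
const initialState = { aggregation: 1, contemplation: 1, power: 1 };
const nextState = applyRow(initialState, { id: 2, data: { x: 0.1, y: 0.7, z: 0.7 } });
console.log(nextState); // only the contemplation reading changes
```

Keeping one row per object means each device only ever writes to its own row, and the web app can tell the sources apart by id alone.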

The physical interaction

The main interactions are picking up the object and manipulating it, as schematized in the image above.

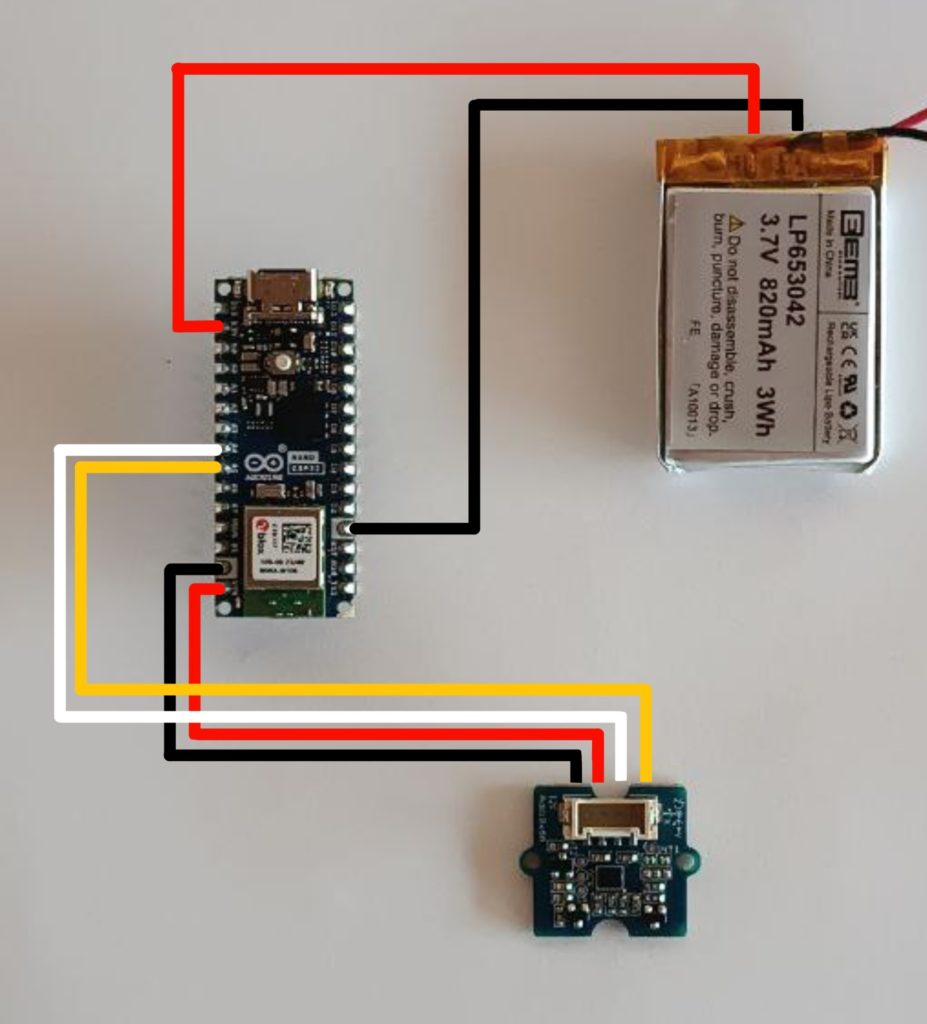

In order to recognize that interaction, each object contains an Arduino Nano ESP32, a small rechargeable battery and an accelerometer. The setup is designed to be as compact as possible to keep the objects relatively small and easy to handle.

The three bases with the accelerometers glued in place, which keeps the sensors fixed in a horizontal position

The electronic components inside each object: the ESP32, the accelerometer and a battery

The Arduino was programmed in C++, with the main features of the code being Wi-Fi connectivity, reading data from the accelerometer, and sending it to the Supabase database.

In the setup, the Arduino attempts to connect to Wi-Fi for 10 seconds. If successful, it then initializes the HTTP element in the `initializeHttp()` function by generating the connection endpoint, specifically targeting the row with the correct ID, and setting up the headers. At this point, the Arduino is ready to update the data in the Supabase database.

```cpp
void setup() {
  // Connect to Wi-Fi, polling every 500 ms for at most 20 tries (about 10 s)
  WiFi.begin(ssid, password);
  int wifiTimeout = 0;
  while (WiFi.status() != WL_CONNECTED && wifiTimeout < 20) {
    delay(500);
    wifiTimeout++;
  }

  // Prepare the HTTP client only if the connection succeeded
  if (WiFi.status() == WL_CONNECTED) {
    initializeHttp();
  }
}

void initializeHttp() {
  // Target the row with id = 1 in the Supabase table
  String endpoint = String(supabaseUrl) + "/rest/v1/" + tableName + "?id=eq.1";
  http.begin(endpoint);

  // Headers required by the Supabase REST interface
  http.addHeader("Content-Type", "application/json");
  http.addHeader("apikey", supabaseKey);
  http.addHeader("Authorization", "Bearer " + String(supabaseKey));
  http.addHeader("Prefer", "return=representation");

  isHttpInitialized = true;
}
```

Inside the loop, two timers are set up: one determines when the sensor reads a value, while the other controls when the data is sent to the database.

Separating the two timers lets the sensor be sampled at one rate and the data be sent at another, so neither task has to wait on a `delay()` call.

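```cpp
void loop() {
  unsigned long currentTime = millis();

  // Read the accelerometer every sampleInterval milliseconds
  if (currentTime - lastSampleTime >= sampleInterval) {
    lastSampleTime = currentTime;
    sensorReading();
  }

  // Send the latest reading to the database every sendInterval milliseconds
  if (currentTime - lastSendTime >= sendInterval) {
    lastSendTime = currentTime;
    sendData();
  }

  yield();  // give other tasks a chance to run
}
```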
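The body of `sendData()` is not shown here. As a rough illustration in JavaScript (not the Arduino code itself), the sketch below builds the kind of request that the endpoint and headers from `initializeHttp()` point to: an update of the single row selected by `?id=eq.1` through Supabase's REST interface. The `PATCH` verb, the `data` column name, the function name `buildUpdateRequest`, and the placeholder URL, key, and table name are assumptions made for the example.

```javascript
// Builds the URL and fetch-style options for updating one row.
// Nothing is sent here; the function only assembles the request.
function buildUpdateRequest({ supabaseUrl, supabaseKey, tableName, rowId, reading }) {
  const url = `${supabaseUrl}/rest/v1/${tableName}?id=eq.${encodeURIComponent(rowId)}`;
  return {
    url,
    options: {
      method: "PATCH", // assumed: the excerpt does not show which verb sendData() uses
      headers: {
        "Content-Type": "application/json",
        apikey: supabaseKey,
        Authorization: `Bearer ${supabaseKey}`,
        Prefer: "return=representation",
      },
      // "data" is a hypothetical column name for the JSON reading.
      body: JSON.stringify({ data: reading }),
    },
  };
}

const request = buildUpdateRequest({
  supabaseUrl: "https://example.supabase.co", // placeholder project URL
  supabaseKey: "public-anon-key",             // placeholder key
  tableName: "objects",                       // placeholder table name
  rowId: 1,
  reading: { x: 0.02, y: 0.31, z: 0.95 },
});
console.log(request.url);
console.log(request.options.body);
```

Passing `request.url` and `request.options` to `fetch` would issue the update. On the Arduino side, the same URL and headers are prepared once in `initializeHttp()` so that only the body changes from one send to the next.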

The on-screen interaction

From single to double visualization

From double to triple visualization

From a technical standpoint, another key element is the web application, which is coded in JavaScript.

The app retrieves data from the database and visualizes the photo archive accordingly. Specifically, based on the inclination of multiple objects, the screen splits, and the photos are displayed at different speeds depending on the angle of inclination.
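To make the behaviour concrete, here is a minimal sketch of how a set of tilt readings could translate into a screen layout and a display speed per archive. The 0.98 threshold and the 400 to 2000 ms range are taken from the `scrollArchiveAgg` excerpt further down. The equal-width split, the constant names, and the helpers `tiltToTimeout` and `planScreen` are assumptions made for the example.

```javascript
const REST_THRESHOLD = 0.98; // at or above this z, the object counts as resting on its base
const MIN_TIMEOUT = 400;     // ms between images at z = 0 (fastest)
const MAX_TIMEOUT = 2000;    // ms between images at z = 0.98 (slowest)

// Same linear mapping as in scrollArchiveAgg: a smaller z gives a shorter delay.
function tiltToTimeout(z) {
  const zSafe = Math.max(0, Math.min(REST_THRESHOLD, z));
  return Math.round(MIN_TIMEOUT + (zSafe / REST_THRESHOLD) * (MAX_TIMEOUT - MIN_TIMEOUT));
}

// Assumed layout rule: the archives of all picked-up objects share the width equally.
function planScreen(readings) {
  const active = Object.entries(readings).filter(([, z]) => z < REST_THRESHOLD);
  const widthPercent = active.length ? 100 / active.length : 0;
  return active.map(([name, z]) => ({ name, widthPercent, timeoutMs: tiltToTimeout(z) }));
}

const plan = planScreen({ aggregation: 0.49, contemplation: 1.0, power: 0.0 });
console.log(plan);
// aggregation: half the screen, one image every 1200 ms
// power:       half the screen, one image every 400 ms
```

With the contemplation object at rest, only two archives are shown, and the object tilted furthest advances its archive the fastest.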

Here is an excerpt of the JavaScript code that manages the connection to the database. It subscribes to database updates and calls the `handleInserts` function to process new data.

```javascript
database
  .channel(tableName)
  .on(
    "postgres_changes",
    { event: "*", schema: "public", table: tableName },
    (payload) => {
      handleInserts(payload.new);
    }
  )
  .subscribe();
```

The `handleInserts` function is the most crucial part of the code. It updates the variables `z`, `c`, and `g` with the new data received from the database. Based on these values, it adjusts the display state of the div containing the photos and then calls the `scrollArchive` function. Here’s an excerpt:

```javascript
if (z < 0.98) {
  // The object has been picked up: cancel any pending hide and show the archive
  if (displayTimerAgg) {
    clearTimeout(displayTimerAgg);
    displayTimerAgg = null;
  }
  document.getElementById("agg").style.display = "block";

  // Start scrolling if it is not running yet, or restart it right after pick-up
  if (!scrollTimerAgg || (prevZ >= 0.98 && z < 0.98)) {
    if (scrollTimerAgg) {
      clearTimeout(scrollTimerAgg);
      scrollTimerAgg = null;
    }
    scrollArchiveAgg();
  }
} else {
  // The object is back at rest: mute at once, hide the archive after 5 seconds
  targetVolumeAgg = 0;
  if (!displayTimerAgg) {
    displayTimerAgg = setTimeout(() => {
      document.getElementById("agg").style.display = "none";
      displayTimerAgg = null;
      if (scrollTimerAgg) {
        clearTimeout(scrollTimerAgg);
        scrollTimerAgg = null;
      }
    }, 5000);
  }
}
```

The second most crucial part of the code is the scrollArchive function, which, depending on the values of z, c, or g, manages the speed at which the images are displayed. Here it is:

```javascript
function scrollArchiveAgg() {
  if (z < 0.98) {
    const images = document.querySelectorAll(".agg-archive");
    if (images.length === 0) return;

    // Find the image currently on screen (fall back to the first one)
    let currentIndex = Array.from(images).findIndex(
      (img) => !img.classList.contains("hidden") || img.style.display !== "none"
    );
    if (currentIndex === -1) currentIndex = 0;

    // Hide every image, then reveal the next one in the sequence
    images.forEach((img) => {
      img.classList.add("hidden");
      img.style.display = "none";
    });
    const nextIndex = (currentIndex + 1) % images.length;
    images[nextIndex].classList.remove("hidden");
    images[nextIndex].style.display = "block";

    if (scrollTimerAgg) {
      clearTimeout(scrollTimerAgg);
    }

    // Map the tilt to a delay: z = 0 gives 400 ms, z = 0.98 gives 2000 ms
    const zSafe = Math.max(0, Math.min(0.98, z));
    const minTimeout = 400;
    const maxTimeout = 2000;
    const timeout = Math.round(
      minTimeout + (zSafe / 0.98) * (maxTimeout - minTimeout)
    );
    scrollTimerAgg = setTimeout(scrollArchiveAgg, Math.max(minTimeout, timeout));

    // The volume rises as z decreases
    let volumeParameter = 1 - z;
    targetVolumeAgg = volumeParameter > 0 ? Math.max(0, Math.min(1, volumeParameter)) : 0.05;
  }
}
```

The sound interaction

The final element of the interaction is sound. Each object is associated with a specific sound: an “Om” yoga chant for the contemplation object; metallic sounds for the aggregation object, evoking mystery; and chanting and marching sounds for the power object, which recall the atmosphere of a ritual.

Contemplation

Aggregation

Power

From a technical standpoint, the sounds are linked to both the scrollArchive and handleInserts functions, which work together to adjust the volume based on the data received from the database.

Specifically, the handleInserts function ensures that the audio volume is set to 0 when the object is still. When the object rotates, the scrollArchive function adjusts the volume based on the values of z, c, or g. The volume change is made smooth thanks to the updateVolumeSmooth() function.

This excerpt of the handleInserts function, already mentioned above, sets the volume to 0 when the z value is above 0.98.

This logic ensures that when the object is nearly stationary, the sound is muted, enhancing the interaction experience:

```javascript
if (z < 0.98) {
  // ...
} else {
  targetVolumeAgg = 0;
  // ...
}
```

This excerpt from the scrollArchive function sets the volume based on the z value when it is below 0.98.

This code dynamically adjusts the volume as the object rotates, creating a smooth and responsive sound interaction based on the object's movement.

```javascript
function scrollArchiveAgg() {
  if (z < 0.98) {
    // ... existing code ...
    let volumeParameter = 1 - z;
    targetVolumeAgg = volumeParameter > 0 ? Math.max(0, Math.min(1, volumeParameter)) : 0.05;
  }
}
```

Finally, this function uses interpolation to ensure the volume changes smoothly:

```javascript
function updateVolumeSmooth() {
  // Move one channel 20% of the way to its target, snapping once the gap is below 0.05.
  // Each channel is handled on its own, so a settled channel cannot cut short the others' fades.
  const step = (current, target) => {
    const diff = target - current;
    if (Math.abs(diff) < 0.05) return target;
    return Math.max(0, Math.min(1, current + diff * 0.2));
  };

  currentVolume = step(currentVolume, targetVolume);
  currentVolumeAgg = step(currentVolumeAgg, targetVolumeAgg);
  currentVolumePow = step(currentVolumePow, targetVolumePow);

  ohmAudio.volume = currentVolume;
  metallicAudio.volume = currentVolumeAgg;
  mantraAudio.volume = currentVolumePow;
}
```
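To get a feel for how long such a fade lasts, the sketch below isolates the interpolation for a single channel and counts the calls needed to go from silence to full volume. The 0.2 factor and the 0.05 snap threshold come from `updateVolumeSmooth()`. The helper names `smoothStep` and `callsToReach` are hypothetical, and the interval at which the function is called is not stated in this write-up.

```javascript
const SNAP = 0.05;  // from the excerpt: snap to the target once the gap is this small
const FACTOR = 0.2; // from the excerpt: fraction of the remaining gap covered per call

// One smoothing step for a single channel.
function smoothStep(current, target) {
  const diff = target - current;
  if (Math.abs(diff) < SNAP) return target;
  return Math.max(0, Math.min(1, current + diff * FACTOR));
}

// Counts how many calls a fade from `from` to `to` needs.
function callsToReach(from, to) {
  let volume = from;
  let calls = 0;
  while (volume !== to) {
    volume = smoothStep(volume, to);
    calls += 1;
  }
  return calls;
}

console.log(callsToReach(0, 1)); // 15
console.log(callsToReach(1, 0)); // 15
```

The remaining gap shrinks by a factor of 0.8 per call, so it drops below 0.05 after 14 calls and the 15th call snaps to the target. If the function ran every 50 ms, for instance, a full fade would take about three quarters of a second.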

The exhibition

Cementificio Saceba, Morbio Inferiore, Switzerland

We had the opportunity to present our installation as part of the end-of-course exhibition at the Saceba cement factory in Italian Switzerland. The space assigned to us was a circular reinforced concrete room, where we could project the archive images and recreate the experience.

The enclosed nature of the room enhanced the project’s sense of unease and curiosity. Using a projector placed under the table and later concealed with a black cloth, we projected the images onto the wall, while the objects were arranged at the entrance of the room.

References

[1] Anselm Kiefer – “The Seven Heavenly Palaces”: https://pirellihangarbicocca.org/en/anselm-kiefer/

[2] FDD Studio – “EM Table”: https://fddstudio.com/portfolio/em-table/

[3] Wouter Le Duc – “The God Machine”: https://onartandaesthetics.com/2017/07/15/the-god-machine-a-project-on-the-nature-of-cults-by-wouter-le-duc/

[4] Anna Shimshak – “The Religious”: https://www.format.com/magazine/galleries/photography/anna-shimshak-religious-photo-series

[5] Carl De Keyzer – “The Great Passion Play”: https://www.carldekeyzer.com/god-inc-1/zhj863y93rcndt6-e3d6b-c9r99-9ndyt-e6z9x-jamwm-ea796-gly5l-kwsc7-rxz39-kjb8y-et3wk-zrh3a-prxp9-7txmp-xaekz-hxx57-9h53h-bh68h-4adcj-mykka-938rt-gg6nw

[6] Alexandre Del Mar – “Estroboscopio”: https://alexandredelmar.com/acto-vi