Everything He Is

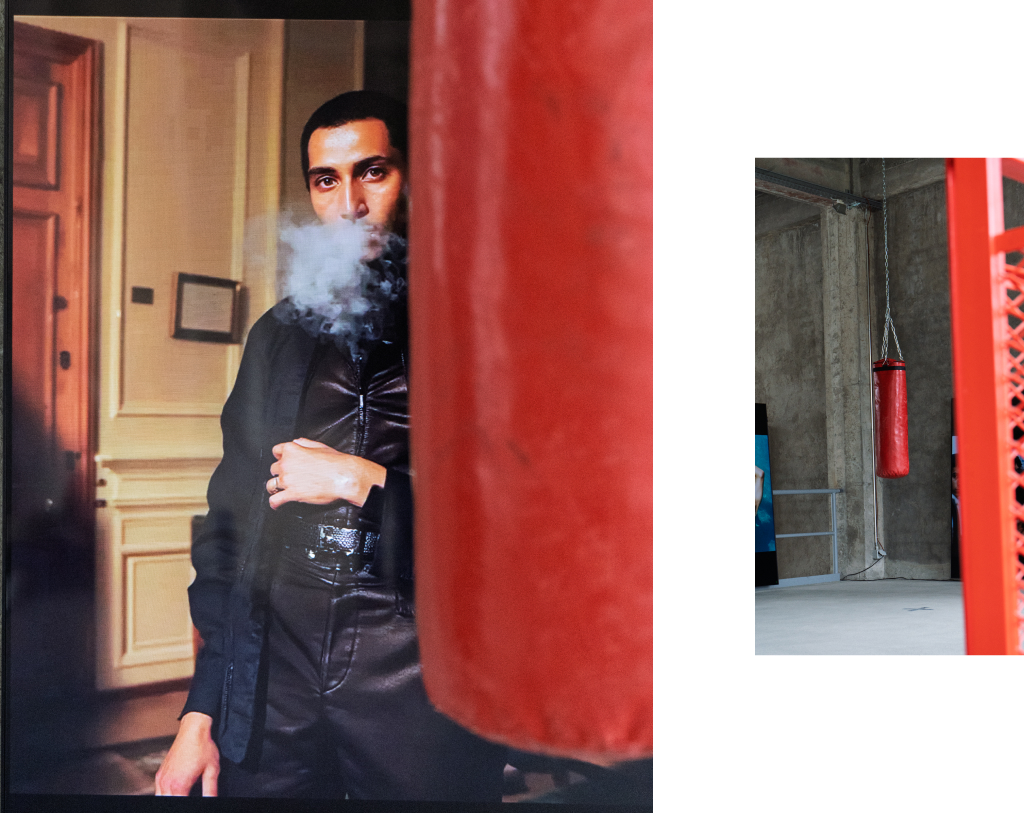

“Everything He Is” is an interactive installation that invites visitors to explore the fluid and multifaceted nature of masculinity. At its center hangs a punching bag – an object traditionally associated with strength, aggression, and resilience. Yet in this space, it becomes a tool for reflection and discovery. As visitors push, pull, or strike the bag, they activate a dynamic archive of 168 AI-generated images that reveal unexpected and intimate portrayals of men—moments of tenderness, vulnerability, care, and emotion.

Video

Selected Images

Concept

Masculinity is not a set of traits, but the sum of every action, every impulse, every moment. It is not something to prove, contain, or define. In this space, it moves.

Everything He Is challenges the rigid expectations placed upon men, revealing the quiet moments of care, intimacy, and emotion often hidden beneath the weight of societal norms—moments that have been reimagined and brought to life through AI-generated imagery.

At the centre of the installation, a punching bag hangs – not as an object of aggression, but as an instrument of exploration. Every push, pull, and strike shifts the archive, revealing, concealing, and reshaping the narrative. The weight, the resistance, the momentum – all echo the forces that shape and challenge masculinity itself.

It asks: What do we expect masculinity to be? Who decides? What happens when we stop trying to shape it? And how does it move when no one is holding it still?

Context

This installation is part of the Designing Spatial Experiences course at SUPSI, led by Leonardo Angelucci in 2025. The brief was to generate an archive of images on a specific theme and create new modes of interaction between the archive, people, and interfaces through hacking objects. The Calura image archive and related story of the rediscovered film archive were generated based on this brief. The installation was exhibited in March 2025 at SACEBA in Morbio Inferiore, Switzerland.

The Archive

This archive was never meant to be an archive. What remains are scattered frames — faded prints, overexposed negatives, contact sheets scrawled with half-erased notes. There are no names, no dates, no single photographer to credit. Just a loose constellation of moments, collected from different hands and different times, held together by instinct more than authorship.

The men in these photographs aren’t posed or performing. They’re simply there — slouched on rooftops, sprawled across beds, sitting barefoot in kitchens. Their bodies are captured mid-laugh, mid-smoke, mid-thought. Some frames are soft and accidental, others painfully precise. Together, they form a visual language of masculinity that feels less about identity than about motion — the way a wrist twists in sunlight, how a back curves in conversation, how someone looks when they forget to be seen.

There is intimacy here, but not always comfort. Sometimes the moments feel intruded upon, other times offered freely. Domestic spaces blur with streets, fluorescent bathrooms with quiet dawns. Everything is immediate. Everything is unpolished.

When we began building Everything He Is, this archive became an anchor. Not for answers, but for tension. These images reflect a masculinity that is restless, contradictory, and constantly in flux — a masculinity not defined by traits, but shaped by action, impulse, and presence.

Prototyping

Idea Definition

The project began not with masculinity, but with a broader investigation into photographic archives as cultural memory. We explored collections across a range of themes, geographies, and eras, focusing on image-making as a tool for constructing identity. Over time, our attention narrowed to archives and bodies of work that challenged normative representations of men – work that revealed emotional, contradictory, and vulnerable dimensions of masculinity.

We drew inspiration from photographers like Nan Goldin, Martin Parr, Kamila K. Stanley, and Senta Simond. Their images offered a template for how masculinity could be re-seen – not as a set of traits, but as a spectrum of postures, gazes, and gestures.

“Sonder” by Adam Lin

A deeply personal archive that documents men in moments of quiet reflection, care, and emotional presence.

“Future Perfect” by Maksym Kozlov

A speculative visual archive imagining possible futures, blending realism with dream-like qualities.

“Dancing with Costică” by Jane Long

A reimagined historical archive that blends past and present, breathing new life into old photographs through playful reinterpretation.

Through this research, we noticed a shared visual and conceptual language in the archives that resonated with our early thought process.

Concept Development

After concluding our image archive research, we began to define the conceptual framework of our project. Among the archives we studied, Sonder by Adam Lin had a particularly profound impact on our thinking. The intimate portrayal of men in quiet, reflective moments presented a counter-narrative to traditional masculinity.

Inspired by Sonder, we decided to focus on masculinity not as a fixed identity, but as something fluid, multiple, and continuously becoming. This led us to explore theoretical perspectives that could deepen our understanding of the topic.

“Sonder” by Adam Lin

Image Generation

Dataset curation

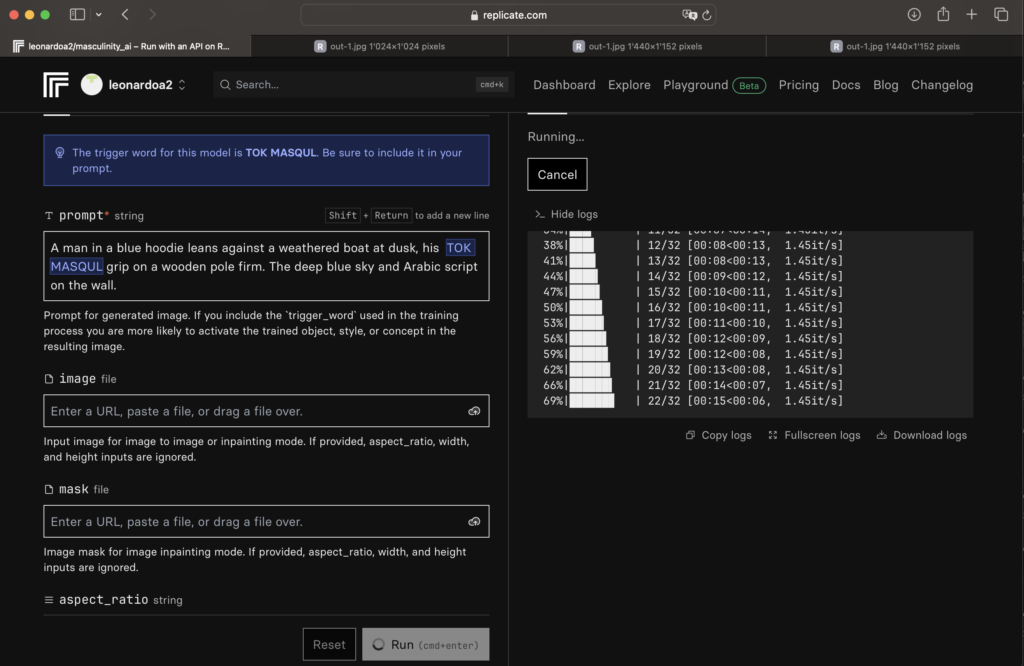

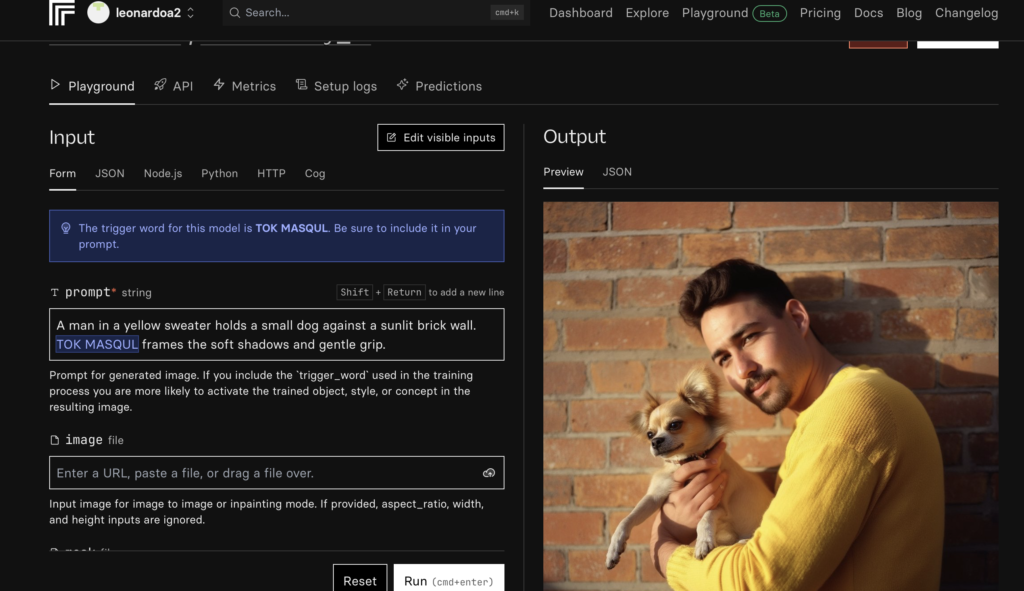

After collecting over 100 matching pictures, we built a dataset of photos that captured the style, content, and mood of the archive we wanted to develop, and used it to train an AI image generation model on Replicate. Once the model was trained, each image was generated individually from a text prompt that included key stylistic constraints, such as portraying only men and resembling 35mm film photographs.

Dataset Selection of 394 images

Using this visual language as reference, we built a dataset of stylistic, compositional, and thematic cues to guide our image generation process.

Training The AI Model

Once we finished curating our dataset, we used Replicate to train an AI model with the images we had collected. After training the model, we began generating images by experimenting with different prompts. This was an iterative process where we learned what worked and what didn’t through trial and error.

One of the key discoveries we made was the importance of adding the Tok-word after the subject description in the prompt—for example, using “man Tok” helped us generate images that better matched the raw, unpolished style we were aiming for. We also found that the more detailed and descriptive the prompt, the more successful the results. On the other hand, specifying things like “limbs” in the prompt often confused the model and led to more mistakes, which is something we had to work around.

Training the model

Replicate Image Generation

Prompt Development

With the custom model trained on Replicate, we turned to the prompts themselves. Each image was generated from a carefully written text prompt referencing:

1. A cinematic, unfiltered collection of images that feel like frames from a messy, underground film – never posed, never clean, never “pretty.” Each image should be visually chaotic, dynamic, immersive, and story-driven.

2. Rooftops at sunrise—someone dangling their feet over the ledge; a subway station flooded with artificial light, a blurry figure stepping onto the train; a corner store at 2AM, wet pavement reflecting flickering neon.

3. Shot on disposable film, raw and imperfect. Grainy, motion blur, slightly overexposed or underexposed. Messy, accidental compositions that feel stolen or candid.

These generated images formed the core archive, later curated for visual and thematic cohesion into a final 168 images.
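The prompt structure described above (a subject followed by the trigger word, then scene and style cues) can be sketched as a small helper. This is only an illustration: `buildPrompt`, `PromptParts`, and the example strings are hypothetical, and the trigger word is written here exactly as it appears in the text.

```typescript
// Trigger word as written in the text; the exact casing used in training is an assumption.
const TRIGGER_WORD = "Tok";

// Hypothetical split of a prompt into the three kinds of cues listed above.
interface PromptParts {
  subject: string; // e.g. "man"
  scene: string;   // e.g. a rooftop at sunrise
  style: string;   // e.g. disposable film, grain, motion blur
}

// Places the trigger word directly after the subject, then appends scene and style.
function buildPrompt(parts: PromptParts): string {
  const subject = `${parts.subject.trim()} ${TRIGGER_WORD}`;
  return [subject, parts.scene.trim(), parts.style.trim()]
    .filter((part) => part.length > 0)
    .join(", ");
}

const examplePrompt = buildPrompt({
  subject: "man",
  scene: "dangling his feet over a rooftop ledge at sunrise",
  style: "shot on disposable film, grainy, slightly overexposed",
});
```

Keeping the parts separate makes it easier to vary the scene while holding the style cues constant across the archive.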

AI Generated Images

Sound

The 10-minute soundscape was designed to mirror the emotional arc of the archive. It begins with subtle ambient sounds—distant football, conversation, laughter—layered to evoke a sense of place. As it builds, heavier textures like breathing and distortion add tension, leading to a brief but overwhelming wall of sound.

This intensity cuts abruptly to silence. From the quiet, waves and soft beach sounds emerge, offering a sense of calm and release. The track loops, repeating the cycle of build-up and collapse.

All the sound effects and audio layers were created and mixed in Adobe Audition.

Installation Soundtrack

App Prototyping

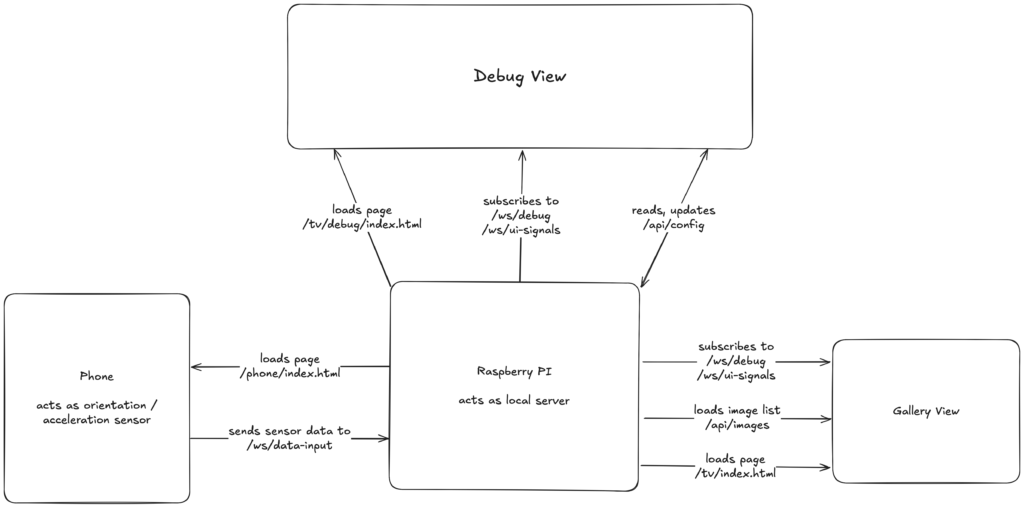

The system is built as a client-server architecture with multiple frontend components and a unified backend server. The core communication between components is handled through WebSocket connections, enabling real-time data streaming and synchronization.

Architecture Overview

The application consists of these main components:

1. Frontend Pages: Multiple web interfaces serving different purposes

2. Backend Server: A Node.js/Express server handling both HTTP and WebSocket connections

Frontend Components

1. Interactive Image Gallery (public/tv/index.html)

– Displays images with dynamic scrolling, panning, and zooming

– Responds to real-time acceleration and orientation data

– Synchronized with mobile device movements

2. Mobile Sensor Interface (public/phone/index.html)

– Captures device motion and orientation data

– Utilizes browser DeviceMotion and DeviceOrientation APIs

– Transmits sensor data via WebSocket connection

3. Debug Dashboard (public/phone/debug.html)

– Real-time visualization of device orientation

– Displays incoming WebSocket event data

– Useful for development and troubleshooting

Backend Components

1. HTTP / WebSocket Server

– Serves the frontend components listed above

– Manages real-time bidirectional communication, including client connections and data routing

– Processes and broadcasts sensor data

2. Transport Protocols

– WebSocket channels for real-time sensor data

– HTTP endpoints for static content

Data Flow Architecture

The system follows a publish-subscribe pattern where:

1. Mobile devices act as data publishers, sending sensor data through WebSocket connections

2. The backend server processes and routes this data to appropriate subscribers

3. Display screens subscribe to specific data streams and update their visualizations accordingly

Each component can be configured to work with specific data streams using unique identifiers (sourceIds), allowing for multiple independent control channels to coexist on the same server.
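As a rough illustration of the routing described above, the sketch below keeps one subscriber set per sourceId and forwards each message only to the displays registered for that id. `SourceRouter`, `SensorMessage`, and the method names are hypothetical. The actual server does this over WebSocket connections, which are left out here to keep the example self-contained.

```typescript
// Minimal message shape; real messages carry more fields (see Message Format).
type SensorMessage = { type: string; sourceId: string; timestamp: number };
type Subscriber = (message: SensorMessage) => void;

// Hypothetical in-memory stand-in for the server's routing table.
class SourceRouter {
  private subscribers = new Map<string, Set<Subscriber>>();

  // Registers a display for one sourceId and returns an unsubscribe function.
  subscribe(sourceId: string, subscriber: Subscriber): () => void {
    const existing = this.subscribers.get(sourceId);
    const set = existing !== undefined ? existing : new Set<Subscriber>();
    if (existing === undefined) {
      this.subscribers.set(sourceId, set);
    }
    set.add(subscriber);
    return () => {
      set.delete(subscriber);
    };
  }

  // Delivers a message to the subscribers of its sourceId; returns how many received it.
  publish(message: SensorMessage): number {
    const set = this.subscribers.get(message.sourceId);
    if (set === undefined) {
      return 0;
    }
    set.forEach((subscriber) => subscriber(message));
    return set.size;
  }
}
```

Because channels are keyed by sourceId, a second punching bag with its own phone and screens could share the same server without the two interfering.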

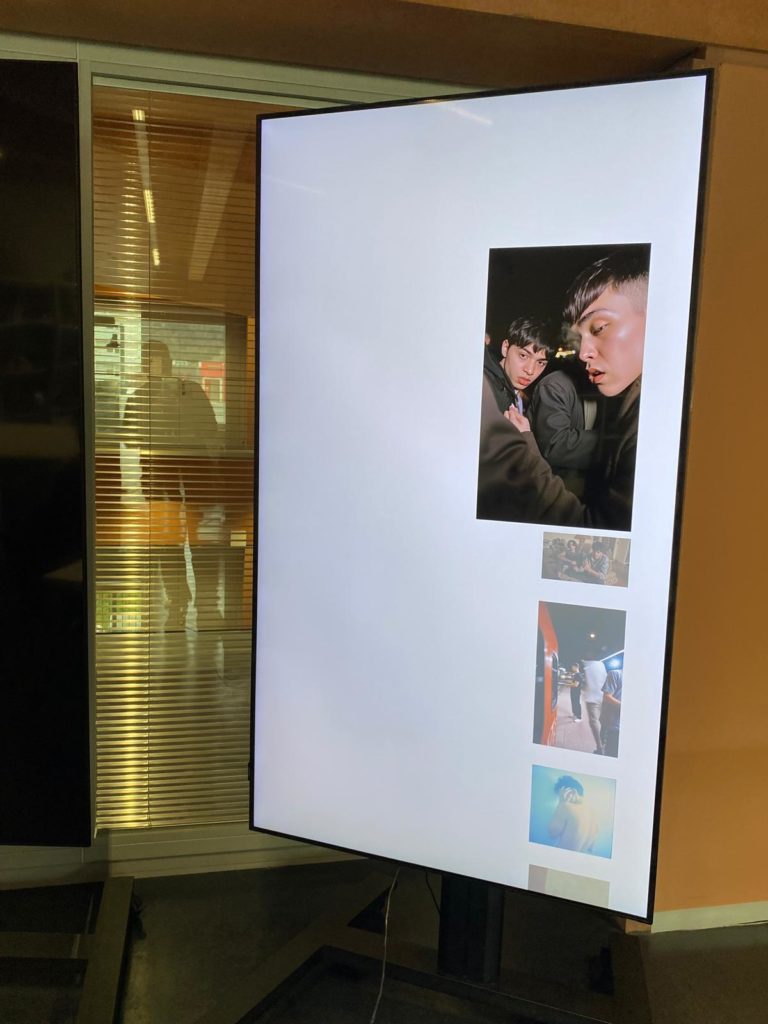

TV interface

The TV interface is a web-based application that displays and responds to device motion data, particularly focused on punch detection and photo display.

The interface implements a robust state synchronization system with automatic reconnection handling and periodic state updates. It maintains consistency across multiple displays through real-time sync status monitoring and on-demand full state synchronization.
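One way to keep several displays consistent is to have each derive the same image order from a shared seed and then apply sync messages to a small local state. The sketch below assumes that is how the `seed` and `selectedIndex` fields are used. The helper names (`mulberry32`, `imageOrder`, `applySync`, `DisplayState`) and the rule of ignoring stale messages are assumptions, not the project's code.

```typescript
// Shape taken from the Sync Event message format in this document.
interface SyncEvent {
  type: "sync";
  action: "fullSync" | "update";
  timestamp: number;
  data: { selectedIndex: number; seed: number; totalImages?: number };
}

// Hypothetical local state held by each display.
interface DisplayState {
  selectedIndex: number;
  seed: number;
  totalImages: number;
  lastTimestamp: number;
}

// mulberry32: a small deterministic PRNG, so equal seeds give equal sequences.
function mulberry32(seed: number): () => number {
  let a = seed >>> 0;
  return () => {
    a = (a + 0x6d2b79f5) >>> 0;
    let t = a;
    t = Math.imul(t ^ (t >>> 15), t | 1);
    t ^= t + Math.imul(t ^ (t >>> 7), t | 61);
    return ((t ^ (t >>> 14)) >>> 0) / 4294967296;
  };
}

// Fisher-Yates shuffle driven by the seeded PRNG: same seed, same order on every screen.
function imageOrder(seed: number, totalImages: number): number[] {
  const random = mulberry32(seed);
  const order: number[] = [];
  for (let i = 0; i < totalImages; i++) {
    order.push(i);
  }
  for (let i = order.length - 1; i > 0; i--) {
    const j = Math.floor(random() * (i + 1));
    const tmp = order[i];
    order[i] = order[j];
    order[j] = tmp;
  }
  return order;
}

// Applies a sync message; messages older than the last applied one are ignored.
function applySync(state: DisplayState, event: SyncEvent): DisplayState {
  if (event.timestamp < state.lastTimestamp) {
    return state;
  }
  const { selectedIndex, seed, totalImages } = event.data;
  const total =
    event.action === "fullSync" && totalImages !== undefined
      ? totalImages
      : state.totalImages;
  return { selectedIndex, seed, totalImages: total, lastTimestamp: event.timestamp };
}
```

A display that reconnects only needs one `fullSync` message to rebuild the same order and position as the others.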

The display system features dynamic perspective adjustments and orientation-based zoom effects, implemented through smooth CSS transitions and responsive layout adaptation. The interface processes device orientation data to create immersive visual effects that respond to device movement in real-time.
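The orientation-based zoom can be pictured as a mapping from tilt angle to a CSS scale value, eased toward its target on each update. The constants and function names below are hypothetical tuning values chosen for illustration. The project's actual parameters are not documented here.

```typescript
// Hypothetical tuning values.
const MAX_TILT_DEGREES = 45;
const MIN_SCALE = 1.0;
const MAX_SCALE = 1.4;

function clamp(value: number, min: number, max: number): number {
  return Math.min(max, Math.max(min, value));
}

// Maps the absolute tilt angle linearly onto the scale range, saturating at MAX_TILT_DEGREES.
function tiltToScale(tiltDegrees: number): number {
  const t = clamp(Math.abs(tiltDegrees) / MAX_TILT_DEGREES, 0, 1);
  return MIN_SCALE + t * (MAX_SCALE - MIN_SCALE);
}

// Moves the current scale a fraction of the way toward the target (simple easing).
function easeToward(current: number, target: number, factor: number = 0.2): number {
  return current + (target - current) * factor;
}

// Formats the scale as a CSS transform value for use with a CSS transition.
function toCssTransform(scale: number): string {
  return `scale(${scale.toFixed(3)})`;
}
```

In a browser, the result would typically be assigned to an element's `style.transform`, with a CSS `transition` on `transform` handling the in-between frames.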

Setting up the TVs

Message Format

Message format 1.0

```typescript
// Punch Event
interface PunchEvent {
  type: "punch";
  intensity: "weak" | "normal" | "strong";
  direction: string;
  timestamp: number;
  syncCounter?: number;
  sourceId?: string;
}
```

Message format 2.0

```typescript
// Sync Event
interface SyncEvent {
  type: "sync";
  action: "fullSync" | "update";
  timestamp: number;
  data: {
    selectedIndex: number;
    seed: number;
    totalImages?: number;
  };
}
```

Phone Interface

The phone interface is a web-based application that collects device motion and orientation data from mobile devices and sends it to the server via WebSocket connection. It’s designed to be lightweight and responsive, with automatic reconnection handling and data throttling.

The interface is optimized for mobile use: all touch interactions (zoom, pan) are disabled to prevent accidental navigation or data interruptions. Data is collected and sent to the server every 50 ms. The page attempts to reconnect to the backend if the WebSocket connection is broken.
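A minimal sketch of the throttling and reconnection behaviour, under stated assumptions: the 50 ms interval comes from the text, while `createThrottle`, `reconnectDelay`, and the backoff values are hypothetical. The clock is injectable so the logic can be exercised without a browser or a live socket.

```typescript
const SEND_INTERVAL_MS = 50; // interval stated in the text

// Wraps a send function so that at most one payload passes per interval.
// Returns true when the payload was sent, false when it was dropped.
function createThrottle<T>(
  send: (payload: T) => void,
  intervalMs: number,
  now: () => number = Date.now
): (payload: T) => boolean {
  let lastSent = -Infinity;
  return (payload: T): boolean => {
    const current = now();
    if (current - lastSent < intervalMs) {
      return false;
    }
    lastSent = current;
    send(payload);
    return true;
  };
}

// Exponential backoff with a cap; base and maximum delays are assumed values.
function reconnectDelay(attempt: number, baseMs: number = 500, maxMs: number = 10000): number {
  return Math.min(maxMs, baseMs * Math.pow(2, attempt));
}
```

In the browser, the throttled function would wrap a call such as `socket.send(JSON.stringify(data))`, and `reconnectDelay` would set the timeout before a new `WebSocket` is opened after a `close` event.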

Acceleration Data

```typescript
interface AccelerationData {
  type: "acceleration";
  sourceId: string;
  timestamp: number;
  acceleration: {
    x: number; // m/s²
    y: number; // m/s²
    z: number; // m/s²
  };
}
```

Orientation Data

interface OrientationData {

type: "orientation";

sourceId: string;

timestamp: number;

orientation: {

x: number;

y: number;

z: number;

absolute: boolean;

};

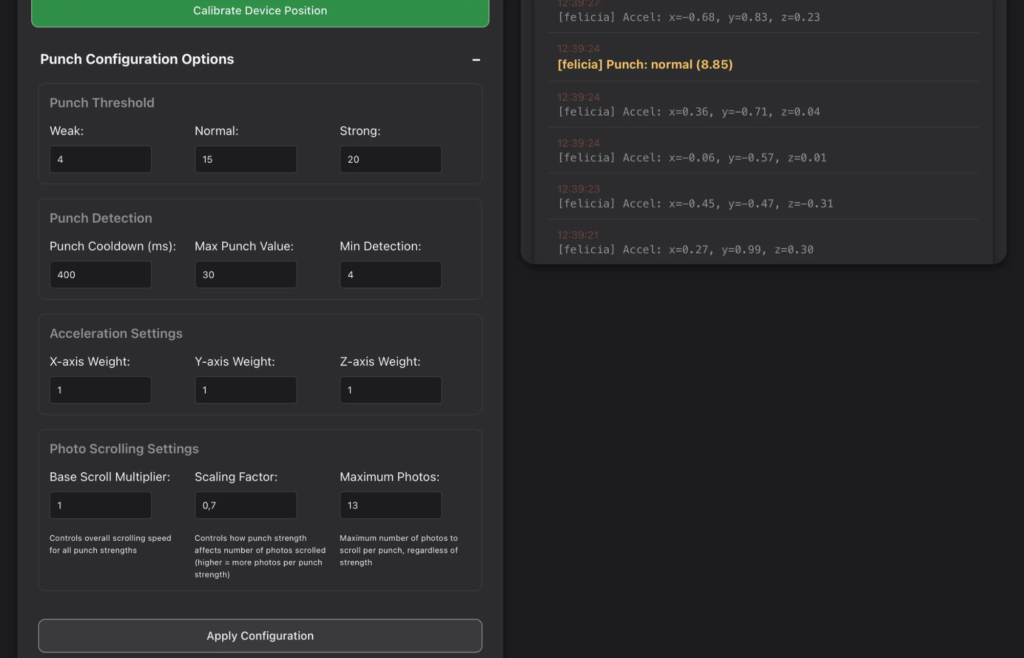

}Debug Interface

The debug interface provides real-time visualisation and monitoring tools for the phone-based motion tracking system.

Features:

1. 3D Phone Orientation Visualization

– Live 3D view of device orientation

– Color-coded axes for movement tracking

– Interactive calibration support

2. Punch Strength Meter

– Real-time acceleration visualization

– Color-coded strength indicators

– Threshold markers for weak/normal/strong

3. Message Logging

– Real-time system message display

– Filterable by message type

– Auto-scrolling with clear option

4. Runtime Configuration

– Live parameter adjustment

– Punch detection settings

– Photo scrolling controls

– Acceleration weights

5. Multi-Device Support

– Source ID selection

– Independent device tracking

– Real-time device switching

Live Debug View

Debug View > Punch Configuration Options

Testing & Iteration

Testing was conducted in both simulated and real environments. Key stages included:

- Sensor Tuning: Filtering out noise, setting thresholds to ignore false positives.

- Image Sync: Ensuring all displays responded identically to punches.

- Punch Cooldown: Preventing rapid-fire punches from skipping too many images.

- Orientation Drift: Accounting for bag motion that wasn’t intentional input.

We refined thresholds through live testing, observing how users interacted naturally. We also logged all sensor values to refine mapping from physical motion to visual effect.
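The threshold and cooldown ideas above can be sketched as a small detector that classifies the acceleration magnitude and suppresses punches that arrive too soon after the previous one. All numeric values and names here (`THRESHOLDS`, `COOLDOWN_MS`, `PunchDetector`) are hypothetical placeholders, since the tuned values from live testing are not listed in this document.

```typescript
type Intensity = "weak" | "normal" | "strong";

interface Acceleration {
  x: number; // m/s²
  y: number; // m/s²
  z: number; // m/s²
}

// Hypothetical thresholds in m/s²; anything below "weak" is treated as noise.
const THRESHOLDS = { weak: 8, normal: 15, strong: 25 };
// Hypothetical cooldown between accepted punches.
const COOLDOWN_MS = 400;

function magnitude(a: Acceleration): number {
  return Math.sqrt(a.x * a.x + a.y * a.y + a.z * a.z);
}

// Maps a magnitude to an intensity, or null when it is below the noise floor.
function classify(value: number): Intensity | null {
  if (value >= THRESHOLDS.strong) return "strong";
  if (value >= THRESHOLDS.normal) return "normal";
  if (value >= THRESHOLDS.weak) return "weak";
  return null;
}

class PunchDetector {
  private lastPunchAt = -Infinity;

  // Returns the punch intensity, or null for noise or a punch inside the cooldown window.
  // Assumes gravity has already been removed from the acceleration values.
  detect(a: Acceleration, timestamp: number): Intensity | null {
    const intensity = classify(magnitude(a));
    if (intensity === null) {
      return null;
    }
    if (timestamp - this.lastPunchAt < COOLDOWN_MS) {
      return null;
    }
    this.lastPunchAt = timestamp;
    return intensity;
  }
}
```

Logging the raw magnitudes alongside the detector's output, as described above, is what makes it practical to adjust these numbers on site.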

First version of the App testing

UI Design

After setting up the app framework, we focused on the UI design. We started by testing different display ideas, including floating images, zoom effects, and panning animations, using Figma to visualize them.

First version of the App UI

UI Animation

In the end, we chose a simple, static display. This decision was partly due to technical constraints, but mostly because we felt a minimal approach kept the focus on the images themselves, without unnecessary distractions.

User Journey

Users enter a quiet room and encounter the suspended bag. They approach out of curiosity, and their first touch triggers a response on the screens. A light push initiates a gentle image change. A strong punch throws the image off-screen. As the bag sways, the image swells and settles. Visitors step back and watch the rhythm continue – an open loop of motion and response. Additionally, an iPad displays the “Debug View” which provides live readouts of sensor values and system state, aiding in calibration and diagnostics.

Installation Space

Saceba Cement Factory

The installation was set up at the former Saceba Cement Factory in Morbio Inferiore, Switzerland. We placed our installation inside the Torre dei Forni, which is one of the main areas of the factory.

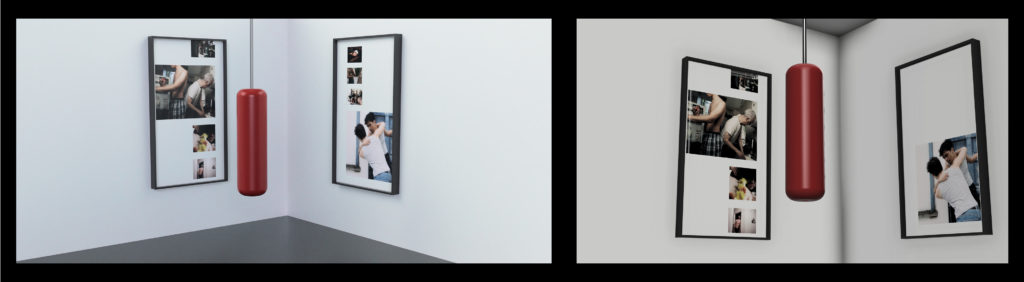

To plan the spatial arrangement of the installation, we created 3D renders to visualise how it would exist in the gallery space. The punching bag – the central point of interaction – is suspended in the middle of the room, with two screens placed on opposite walls in one corner. This positioning was chosen to create a sense of immersion, allowing visitors to engage physically with the punching bag while being surrounded by the images it triggers.

First renders of the installation, Fusion 360

Final Fusion 360 render

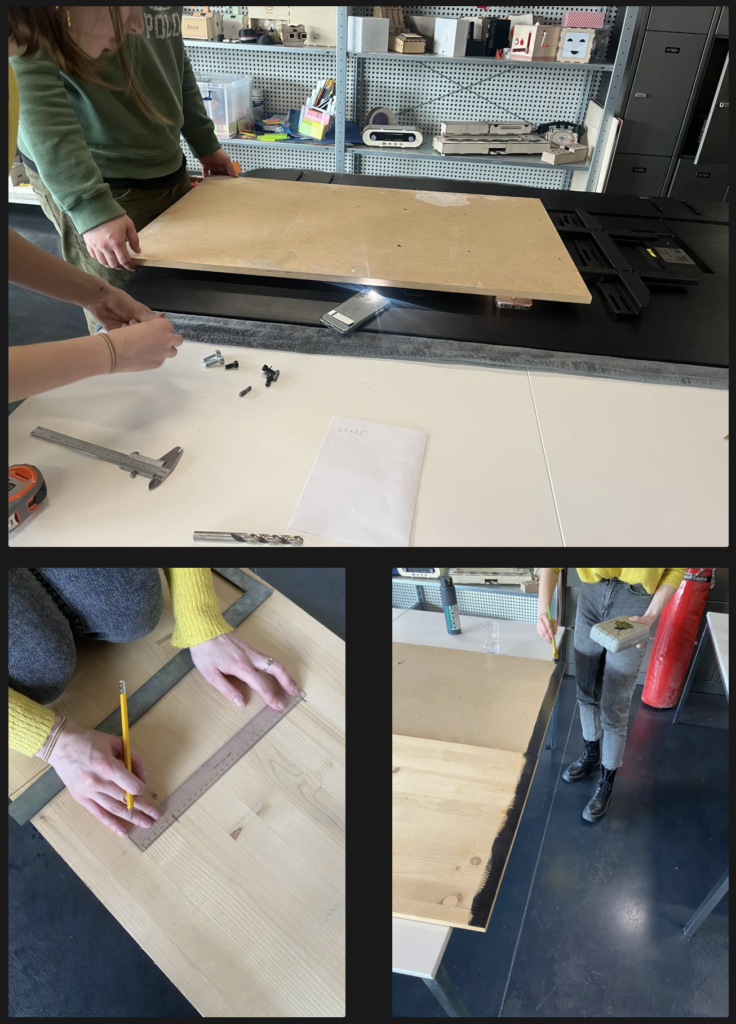

Punching Bag

We wanted the punching bag to visually communicate its history: something worn, used, and aged, rather than new and untouched. To achieve this, we physically distressed the surface by dragging it over concrete, sand, and stones, and added tears, scuffs, and layers of chalk dust.

To install the bag, we used a 2-meter-long chain and secured it with a 200 kg-rated carabiner to a ceiling hook in the space. This setup ensured the bag could safely swing with user interaction while staying stable and centered in the room.

Installing the punching bag

Displays

The two screens display the AI-generated images in real time. They are placed on opposite walls in one corner of the room to create a semi-enclosed space around the visitor, enhancing the immersive quality of the interaction. This arrangement encourages the visitor to move with and around the punching bag while remaining engaged with the visuals.

To securely position the screens and protect them during the installation, we built custom wooden stands. These stands provide stability and ensure that the screens remain at an ideal viewing height for visitors while maintaining the clean, minimal aesthetic of the space.

Exhibition at Saceba - Gallery

References

- Photography style: Martin Parr, Nan Goldin, Kamila K. Stanley, Senta Simond

- “Sonder” by Adam Lin, Photo Archive Inspiration

- “Dancing with Costică” by Jane Long, Photo Archive Inspiration

- “Future Perfect” by Maksym Kozlov, Photo Archive Inspiration

- Sound: Freesound.org